Reassessing My “Works on My Machine” Certificate

I recently remembered that about 13 years ago I was fully certified by the “Works on My Machine” certificate program. Although I went through the entire evaluation process as required by Joseph Cooney in this blog of his, to be honest, I didn’t quite like how his certificate looked. So I decided to go the extra mile (well, really, the extra few steps) to get the revised certificate from Jeff Atwood’s version of the program.

These are the steps I had to complete to achieve this shining moment:

- Compile your application code. Getting the latest version of any recent code changes from other developers is purely optional and not a requirement for certification.

- Launch the application or website that has just been compiled.

- Cause one code path in the code you’re checking in to be executed. The preferred way to do this is with ad-hoc manual testing of the simplest possible case for the feature in question. Omit this step if the code change was less than five lines, or if, in the developer’s professional opinion, the code change could not possibly result in an error.

- Check the code changes into your version control system.

Since that momentous occasion, I’ve issued numerous such certificates to my co-workers, employees, and, of course, to myself. I started managing Rookout’s R&D team about 9 months ago, and I was a bit baffled to realize that I hadn’t yet had to issue a single “Works on My Machine” certificate to my employees. As I sat down to ponder this, I thought to myself: how come? How could it be that none of them has received one? Has none of my employees deserved this certificate?

There is no “My Machine”

When we look at our tech stack and architecture, and specifically at the “machine”, a variety of challenges appear. In every case the “machine” alone isn’t enough: there is always something else that has to be factored into the equation. This is true even when we have total control over our machines in all environments.

In our web application, we face the same challenge that all front-end developers face: the elusive and vastly fragmented world of browsers. From mobile web views through Firefox, Safari, and Chrome, we meet them in all shapes and versions. Our functionality is supported on pretty much all browsers, but all of our CI/CD and automation is dedicated to Chrome (Chromium, to be exact). We think that this covers most cases and that asking our customers to use Chrome/Chromium for the perfect experience is a legitimate request.

Our web application might not look perfect on all browsers, but the core functionality fully works. Since we decided to dedicate our browser support to Chrome, we have been running our complete regression and end-to-end test suites with TestCafe on headless Chromium. Considering we’re no longer in 2007, replacing the “machine” is simply a matter of editing our Dockerfile; Jenkins does the rest. Apart from testing on a specific browser, we of course use Babel for polyfilling.

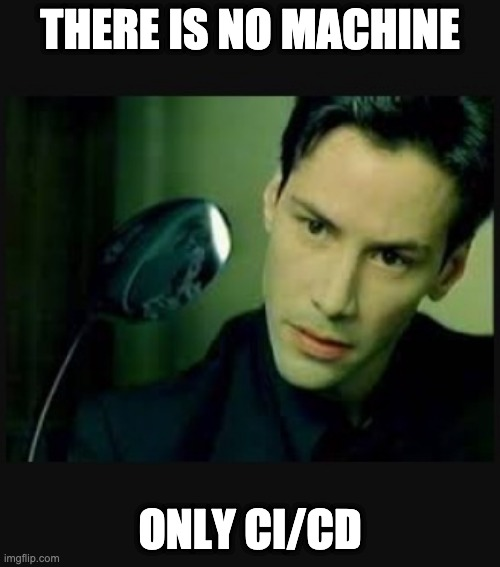

Works on that machine

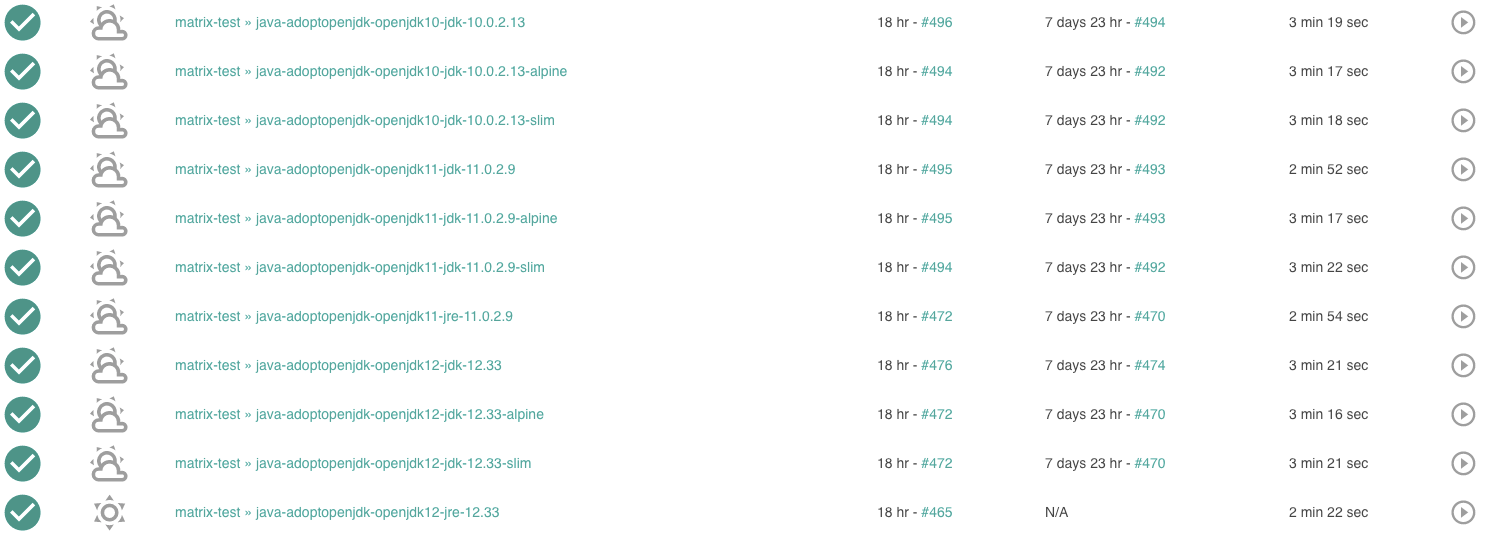

The area that looks the most complicated, with the biggest variety of machines, is our SDK. Rookout’s SDK supports Python, Java, Node.js, and .NET. Each of these languages has multiple versions, dialects, frameworks, and environments. As if that didn’t add enough pressure, we have to fully test each one and make sure nothing breaks every time we change anything (which, as we all know, is a very real fear).

To that end, it seems we could actually use a new sort of certificate program, which we can call “Works on That Machine”. Instead of acquiring only that one measly certificate that says you’ve tested it on your development machine, what we actually need to do is stack up a certificate for every environment we test on. We have our own test matrix, which consists of testing our SDKs on as many permutations as we can. Looking at Java, for example, we take into account the permutations of the JVM runtime and version, the OS, and other environment configurations.
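To make the idea of a test matrix concrete, here is a minimal sketch of how such permutations could be enumerated. The axis values and the excluded combination are made up for illustration; they are not our actual matrix.

```python
from itertools import product

# Hypothetical axes, for illustration only.
JVM_RUNTIMES = ["hotspot", "openj9"]
JAVA_VERSIONS = ["8", "11", "17"]
OPERATING_SYSTEMS = ["ubuntu", "alpine", "windows"]

# Combinations we choose not to test (also hypothetical).
EXCLUDED = {("openj9", "8", "windows")}


def build_matrix():
    """Return every (runtime, version, os) combination that should be tested."""
    return [
        combo
        for combo in product(JVM_RUNTIMES, JAVA_VERSIONS, OPERATING_SYSTEMS)
        if combo not in EXCLUDED
    ]


def certificate_wall(results):
    """Turn {combination: passed} results into a wall of certificates."""
    return {
        combo: "certified" if passed else "not certified"
        for combo, passed in results.items()
    }


if __name__ == "__main__":
    for runtime, version, os_name in build_matrix():
        print(f"{runtime} / Java {version} / {os_name}")
```

Each entry in the matrix would then map to one CI job, and each green job to one “Works on That Machine” certificate.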

Whenever a customer asks us whether we support their specific tech stack, we go back to our wall of certificates and check whether we’ve earned that specific “Works on That Machine” certificate. In essence, we keep issuing ourselves more and more certificates, much like army veterans who look like they might fall over from the sheer weight of all those medals on their chests. And yes, that’s exactly what we anticipate our wall will look like.

Backend – Thinking you’re in control

Things start to get really weird when you look at your backend. Since the world started working with VMs, containers, Docker, and all of those other technical wonders, you feel pretty much covered. Develop on your machine, shove it into your Dockerfile or whatever works for you, and voila! You’re testing and working on the same machine as in production. There are no “end users” or weird machines that your application is installed on. So “Works on My Machine” is good enough here, right?

Wrong! It will definitely boot up properly and work fine when you are the one interacting with it and sending in the data that you are used to sending. However, you need to keep in mind that all hell breaks loose (no exaggeration, trust me) when real users start interacting with your machine. Your code, and thus your application, are merely “extras” in this movie; the main actor is the data. Whether it’s data you weren’t expecting or a huge amount of data arriving all at once, the fact remains: data is the main actor.

Let’s be honest. Nobody cares if you have the “Works on My Machine” certificate. What you really need is that luxurious “Works with Real Data” certificate. This isn’t true only for the backend where you have control over your machines, but everywhere.

“Works with Real Data” certificate

In order to make yourself ready for the real world, you need to simulate the real world in advance. Seems simple enough? Here are a few ways to do just that:

- Manually create the data – use a demo environment whose behavior you know and can predict.

- Automate data generation – bombard your application with “random” or intelligently crafted data.

- Mass copy real data – copy data from your production database and use it in your testing environment.

All of these are valid methods to prepare your application for the real world. Using real data that’s been copied from production is “as good as the real thing”, but doing so as part of your routine isn’t recommended. Your users’ real data is usually private data that must be guarded and shouldn’t be moved around. As they say, security first!
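As a rough sketch of the “automate data generation” option, the snippet below mixes hand-crafted edge cases with seeded random strings and throws them at a function. The function under test, `parse_quantity`, and the list of edge cases are hypothetical stand-ins for your own code and your own nightmares.

```python
import random
import string

# Hand-picked edge cases that real users tend to send sooner or later.
EDGE_CASES = ["", " ", "0", "-1", "null", "a" * 10_000, "名前"]


def random_text(rng, max_len=50):
    """Build a random string of printable characters."""
    length = rng.randint(0, max_len)
    return "".join(rng.choice(string.printable) for _ in range(length))


def generate_inputs(count, seed=0):
    """Yield a mix of crafted edge cases and random strings."""
    rng = random.Random(seed)  # seeded so that a failure can be reproduced
    for _ in range(count):
        if rng.random() < 0.3:
            yield rng.choice(EDGE_CASES)
        else:
            yield random_text(rng)


def parse_quantity(raw):
    """Hypothetical function under test: parse a non-negative integer."""
    value = int(raw.strip())
    if value < 0:
        raise ValueError("quantity must be non-negative")
    return value


def bombard(func, inputs):
    """Call func on every input and collect the unexpected failures."""
    unexpected = []
    for raw in inputs:
        try:
            func(raw)
        except ValueError:
            pass  # a documented rejection, not a bug
        except Exception as exc:  # anything else is a finding
            unexpected.append((raw, exc))
    return unexpected


if __name__ == "__main__":
    findings = bombard(parse_quantity, generate_inputs(1000))
    print(f"{len(findings)} unexpected failures")
```

Seeding the generator is a deliberate choice: when an input does break something, you can replay exactly the same run instead of hoping the bug shows up again.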

How do I get certified?

No matter what you do, and how you prepare yourself, you must interact with the real data in order to get your “Works with Real Data” certificate.

In my experience, the best way to do this is to get your code into your production environment really fast and see how it interacts with real data, as I discussed in a previous blog post. You won’t, and shouldn’t, do it blindly. We use LaunchDarkly’s feature flags to do so gradually and carefully. Alternatively, some companies use multiple production environments and run A/B tests across them with different versions.
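To illustrate what “gradually and carefully” can mean, here is a minimal sketch of a percentage-based rollout. This is not LaunchDarkly’s SDK or API, just a hand-rolled illustration of the general idea; the flag name and function names are hypothetical.

```python
import hashlib


def rollout_bucket(flag_name, user_id):
    """Map a (flag, user) pair to a stable bucket in the range 0..99."""
    key = f"{flag_name}:{user_id}".encode("utf-8")
    return int(hashlib.sha256(key).hexdigest(), 16) % 100


def is_enabled(flag_name, user_id, rollout_percent):
    """Return True when the user falls inside the rollout percentage."""
    return rollout_bucket(flag_name, user_id) < rollout_percent


def handle_request(user_id, rollout_percent):
    # "new-parser" is a hypothetical flag name.
    if is_enabled("new-parser", user_id, rollout_percent):
        return "new code path"
    return "old code path"


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(1000)]
    exposed = sum(is_enabled("new-parser", u, 10) for u in users)
    print(f"{exposed} of {len(users)} users see the new code")
```

Because the bucket is derived from a hash rather than a coin flip, a given user keeps getting the same answer, and raising the percentage only ever adds users to the new code path.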

Once your application has handled a variety of real data (different users and varying scale), you will definitely be able to self-issue a “Works with Real Data” certificate. However, keep in mind that you will always be surprised by new data that you’ve never seen before, because sometimes it seems that the Spanish Inquisition uses your software.

I’ve been certified, now what?

So, you’ve been certified, congrats! You chill and watch your application behave, but something isn’t really working. Perhaps you see your application crash, or maybe Bugsnag or OpsGenie is trying to get your attention to tell you that something is wrong. Usually, you will have some of that data collected, whether you proactively collected it in your logs or your exception handler caught it. However, in our experience, that real data is very elusive, and you’ll always be missing the exact data that challenged your application.
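For reference, the “proactively collected” approach usually looks something like the sketch below: a decorator that logs a call’s arguments when it fails. The names `log_inputs_on_failure` and `parse_order` are hypothetical, and in a real system you would need to scrub private data before logging it.

```python
import functools
import logging

logger = logging.getLogger("app")


def log_inputs_on_failure(func):
    """Log the arguments of a call when it raises, then re-raise."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        try:
            return func(*args, **kwargs)
        except Exception:
            # Truncate the repr so a huge payload does not flood the log.
            logger.exception(
                "%s failed with args=%s kwargs=%s",
                func.__name__,
                repr(args)[:200],
                repr(kwargs)[:200],
            )
            raise

    return wrapper


@log_inputs_on_failure
def parse_order(payload):
    """Hypothetical handler: expects a dict with an integer 'quantity'."""
    return int(payload["quantity"])
```

The catch is the one described above: this only captures what you thought to wrap ahead of time, and only at the point where things finally blew up.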

This is exactly why we started Rookout. We believe that your application isn’t only code. Your application is the tight bond between your code and your users’ data. Our product allows you to collect real data from your application anywhere, anytime. With Rookout, you don’t have to plan ahead and worry about the unexpected data you didn’t future-proof against. Sit back, relax, and enjoy that certification.