10 Dos and Don’ts When Debugging Cloud-Native Applications

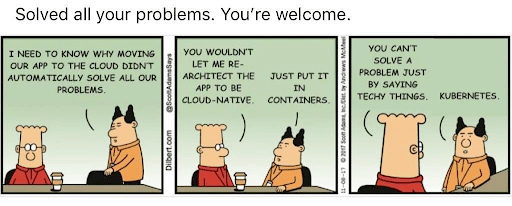

Lately, everyone has been jumping on the cloud transformation bandwagon, which isn’t surprising, because when it comes to tech, you don’t want to fall behind, stuck with your dusty old monoliths. Just kidding – we love ourselves some good old monolith architectures – but there’s no comparing them to dynamic, cloud-native technology.

And while we could talk all day about the benefits of cloud-native and modern architectures like Kubernetes and AWS Lambda, we have to look the truth in the face: as wonderful and dynamic as they are, and as much as they improve developer efficiency and speed up processes, cloud-native applications raise complex challenges for the developers trying to debug them. Debugging gets even harder when you’re doing it in real time, in production environments.

But you’ve come to the right place! We put together a preliminary list of Dos and Don’ts to keep in mind as you debug your cloud-native applications.

Take a look.

#1 – Which version of code are you looking at?

Don’t: Assume you have the right version of the code. Cloud-native applications frequently change and code versions can vary across different deployments.

Do: Find a tool to make sure you’re looking at the right version of the code. If your IDE is showing a local branch or the most recent commit, there’s a good chance you’re looking at a different logical flow than the one running in production.
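
As a rough illustration of that check, here is a minimal Python sketch that compares the commit checked out locally with the commit a deployment reports. It assumes the deployed service exposes its commit SHA somewhere you can read it (a build label, a version endpoint, an environment variable); how you obtain that value is up to your setup, and the function names are hypothetical.

```python
import subprocess


def local_head(repo_dir: str = ".") -> str:
    """Return the full commit SHA currently checked out in repo_dir."""
    result = subprocess.run(
        ["git", "rev-parse", "HEAD"],
        cwd=repo_dir,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def same_revision(local_sha: str, deployed_sha: str) -> bool:
    """Compare two commit SHAs, tolerating an abbreviated SHA on either side."""
    a, b = local_sha.strip().lower(), deployed_sha.strip().lower()
    if min(len(a), len(b)) < 7:  # too short to be a meaningful match
        return False
    return a.startswith(b) or b.startswith(a)
```

Calling `same_revision(local_head(), deployed_sha)` before you start reading code is a cheap way to catch the case where your IDE and production disagree.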

#2 – Stepping through code

Don’t: Assume you know where the problem is and rush in to “step over” functions. We’ve all done it – and how often are we actually right?

Do: Eliminate problem categories step by step. Do this by taking a step back to try to get a bird’s eye view of the entire cluster or environment. Then, try to track the debug flow and “step into” the code as much as possible.

#3 – Placing blame

Don’t: Blame a specific container or server. They need to be treated equally.

Do: Look at your containers as a group, not as unique units. Remember that each microservice is expected to run on dozens or hundreds of containers that behave similarly, and that multiple microservices interact with each other, so you may need to trace an issue across several services.
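
One way to look at containers as a group is to compare them against each other before singling any one out. The sketch below is only an illustration: the sample format (`service`, `container`, `requests`, `errors`) and the 5% threshold are assumptions, not something a particular monitoring tool provides.

```python
from collections import defaultdict
from statistics import median


def error_rates_by_service(samples):
    """Group per-container samples into {service: {container: error_rate}}."""
    grouped = defaultdict(dict)
    for s in samples:
        rate = s["errors"] / s["requests"] if s["requests"] else 0.0
        grouped[s["service"]][s["container"]] = rate
    return grouped


def classify(rates, threshold=0.05):
    """Label one service from its containers' error rates.

    Returns ("healthy" | "fleet-wide" | "outliers", [containers above threshold]).
    """
    bad = sorted(c for c, r in rates.items() if r > threshold)
    if not bad:
        return "healthy", []
    # If the typical container is failing, the problem is in the service, not a box.
    if median(rates.values()) > threshold:
        return "fleet-wide", bad
    return "outliers", bad
```

A "fleet-wide" result points at the code, config, or a dependency shared by the whole service, while "outliers" suggests looking at what is different about those few containers, such as their node, zone, or rollout version.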

#4 – Reproducing Issues

Don’t: Try to reproduce an issue locally when it seems likely to originate in a large-scale, complex production environment, especially when network and data conditions can’t be replicated.

Do: Find efficient and safe ways to reproduce and isolate the issue where it normally occurs, meaning whichever environment the issue was reported in.

#5 – Logging FOMO

Don’t: Give in to logging FOMO; it will only add a hefty price tag to your overhead costs. For example, enabling DEBUG or TRACE logs across your entire application will increase your logging costs, impact your app performance, and generate a huge amount of unnecessary data you’ll have to search through.

Do: Implement logging best practices. Dynamically and granularly increase log verbosity and allow your developers to shine a light into the darkness of their code. Get just the data you need to troubleshoot, without overwhelming yourself or your app.
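
To make "dynamically and granularly" a bit more concrete, here is a small sketch using Python’s standard `logging` module. It raises the verbosity of a single named logger for a limited scope and then restores it, leaving the rest of the app at its normal level. The logger name `payments.refunds` is made up for the example, and in a real service the trigger would more likely be a config flag or an admin endpoint than a `with` block.

```python
import logging
from contextlib import contextmanager


@contextmanager
def temporary_level(logger_name: str, level: int):
    """Change one logger's level for the duration of a block, then restore it."""
    logger = logging.getLogger(logger_name)
    previous = logger.level
    logger.setLevel(level)
    try:
        yield logger
    finally:
        logger.setLevel(previous)


logging.basicConfig(level=logging.WARNING)

# Hypothetical logger name: only this part of the app gets verbose.
with temporary_level("payments.refunds", logging.DEBUG) as log:
    log.debug("refund lookup started")  # emitted while the block is active

# Outside the block the logger inherits the WARNING default again.
logging.getLogger("payments.refunds").debug("not emitted")
```

Because only one logger changes, other loggers under the same parent stay quiet, which keeps both the volume and the cost of the extra data down.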

#6 – Real-Time Data

Don’t: Get stuck in the awful cycle of adding a log line and then waiting for CI/CD to get you the data you’re missing. We’ve all done it, and we all know you’ll be waiting quite a while.

Do: Get the debug data that you’re missing, in real time, with the proper live debugging tools.

#7 – Work Together

Don’t: Keep everything to yourself, including debug flows and the issues you’re facing or finding. Someone else might have the answer (but yeah, try your rubber duck first).

Do: Collaborate and share new debug data across all teams, using your favorite tool.

#8 – Distributed and dynamic

Don’t: SSH into a specific server. In distributed environments, especially with microservices, there’s no single server to connect to.

Do: Jump on the distributed bandwagon. Embrace distributed logging and tracing methods so you can collect data from distributed, dynamic microservices.
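
A common building block for distributed logging is a request ID that travels with each request and shows up on every log line, so logs from different services can be stitched together. The sketch below shows the idea with Python’s standard library only. The `X-Request-ID` header name and the helper functions are assumptions for illustration; a production setup would more likely rely on a dedicated tracing library.

```python
import contextvars
import logging
import uuid

# Holds the ID of the request currently being handled (per thread or async task).
request_id_var = contextvars.ContextVar("request_id", default="-")


class RequestIdFilter(logging.Filter):
    """Copy the current request ID onto every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.request_id = request_id_var.get()
        return True


def incoming_request_id(headers: dict) -> str:
    """Reuse the caller's ID if present, otherwise start a new one."""
    return headers.get("X-Request-ID") or uuid.uuid4().hex


def outgoing_headers() -> dict:
    """Headers to attach to downstream calls so the ID follows the request."""
    return {"X-Request-ID": request_id_var.get()}


# Wire the filter into a handler so the ID appears on every line.
handler = logging.StreamHandler()
handler.addFilter(RequestIdFilter())
handler.setFormatter(logging.Formatter("%(request_id)s %(name)s %(message)s"))
```

At the start of each request you would call `request_id_var.set(incoming_request_id(headers))`, and pass `outgoing_headers()` along on any call to another service. Searching your log store for one ID then returns the whole path of that request, whichever containers it touched.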

#9 – Changing code

Don’t: Interrupt the app you’re trying to troubleshoot by changing code, stopping your app, or restarting it.

Do: Access code-level data from a live environment while it’s still running. Find the tools that enable you to do so without compromising your performance or running code.

#10 – Debug cloud-native applications

Don’t: Debug alone or bang your head against your keyboard (seriously – it will only mess up your code).

Do: Consult your rubber duck, debug with a friend, or use a purple bird 😉

The TL;DR

When it comes to debugging cloud-native applications, and specifically doing so in real time in production environments, you can face quite a few challenges. But that shouldn’t scare you off. Whether it’s making sure you’re using the proper tools, building the correct workflow, consulting the right people, or even implementing a few of the recommendations above, it will be a game-changer for your devs’ workflows.

Good luck – and happy debugging 😉