Developer Tools for Kubernetes in 2021: Docker, Kaniko, Buildpack & Jib (Part 4)

Over the last few blog posts, I have covered critical elements of developer tooling for Kubernetes and how things are looking in 2021. As we continue to dive into that discussion, we must not forget the process of building container images.

Of course, most of us create our images by writing Dockerfiles and building them with the Docker engine. And yet, more and more teams are adopting newer alternatives. After all, the Docker image format was standardized long ago as part of the OCI (Open Container Initiative).

In this blog post, I’ll cover updates to the Docker engine, including BuildKit and Buildx, the CNCF Buildpacks project, and a few Google open-source alternatives such as Kaniko and Jib.

As you read through this post, it’s essential to keep in mind the distinction between the two main elements used to build a container image. The first element is the format we use to specify the container image – for instance, a Dockerfile. The second element is the engine we use to build the container image – for example, the Docker engine. Some of the tools we’ll cover in this post focus on only one of those elements, while others offer both.

Docker

Since selling off its enterprise business to Mirantis, Docker has been focused on developer tooling around containers, first and foremost the Docker Desktop application. In late 2020, Docker released version 2.4.0.0 of the application, which (finally!) migrated the image building experience to BuildKit, which had until then been an experimental project.

Some of the highlights of the BuildKit engine for us as Docker end-users include:

- Faster build performance.

- Remote caching of build steps. Caching happens in the form of image layers.

- Two new CLI flags: --secret and --ssh.
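To make the --secret flag a little more concrete, here is a minimal Python sketch that only assembles the pieces and does not run Docker itself. The secret id `api_token`, the file path, the image tag, and the helper names are all hypothetical; the `RUN --mount=type=secret` instruction and the `id=...,src=...` flag syntax are the parts that come from BuildKit.

```python
import os
import shlex

# Hypothetical Dockerfile: the secret id "api_token" is illustrative only.
DOCKERFILE = """\
# syntax=docker/dockerfile:1
FROM alpine:3.13
# The secret is mounted only for this RUN step and is not stored in a layer.
RUN --mount=type=secret,id=api_token \\
    wc -c /run/secrets/api_token
"""


def build_command(tag, secret_id, secret_path, context="."):
    """Return the argv for a BuildKit build that passes one secret file."""
    return [
        "docker", "build",
        "--secret", f"id={secret_id},src={secret_path}",
        "--tag", tag,
        context,
    ]


def build_env():
    """Copy the current environment and opt in to BuildKit explicitly."""
    env = dict(os.environ)
    env["DOCKER_BUILDKIT"] = "1"
    return env


if __name__ == "__main__":
    cmd = build_command("demo:latest", "api_token", "./api_token.txt")
    # Print the command instead of running it, so the sketch works without Docker.
    print(shlex.join(cmd))
```

The --ssh flag follows the same pattern, paired with `RUN --mount=type=ssh` in the Dockerfile. To actually run the build, the command and environment could be passed to `subprocess.run(cmd, env=build_env(), check=True)`.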

If you are looking to dive even deeper into BuildKit and its advanced features to optimize the performance and security of your build process, you should check out Buildx, the Docker CLI build plugin.

The container images we build using Docker are specified using Dockerfiles. The Dockerfile format offers a straightforward, procedural approach to image building. It has a relatively gentle learning curve: it is easy to get started with and not too tricky to master. Docker multi-stage builds (available since 2017) give us even more granular control, separating the temporary build and test images from the final runtime image. Using a multi-stage Dockerfile, we can choose from a plethora of minimal runtime images such as distroless.
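As a rough illustration of the multi-stage idea, the following Python sketch renders a two-stage Dockerfile as text. The Go build command, the image names passed in, and the `render_multistage` helper are assumptions chosen for the example, not something prescribed by Docker.

```python
def render_multistage(build_image, run_image, binary="app"):
    """Render a two-stage Dockerfile: compile in one image, ship in another."""
    return "\n".join([
        # Stage 1: a full toolchain image, used only while building.
        f"FROM {build_image} AS build",
        "WORKDIR /src",
        "COPY . .",
        f"RUN CGO_ENABLED=0 go build -o /out/{binary} .",
        "",
        # Stage 2: a minimal runtime image that receives only the binary.
        f"FROM {run_image}",
        f"COPY --from=build /out/{binary} /{binary}",
        f'ENTRYPOINT ["/{binary}"]',
        "",
    ])


if __name__ == "__main__":
    print(render_multistage("golang:1.16", "gcr.io/distroless/static"))
```

Only the second stage ends up in the final image, so the compiler and source code from the first stage never ship to production.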

Buildpacks

Buildpacks (not to be confused with BuildKit) is a CNCF project bringing a more structured image building approach. This additional structure brings with it a better separation of concerns, but unfortunately results in additional complexity.

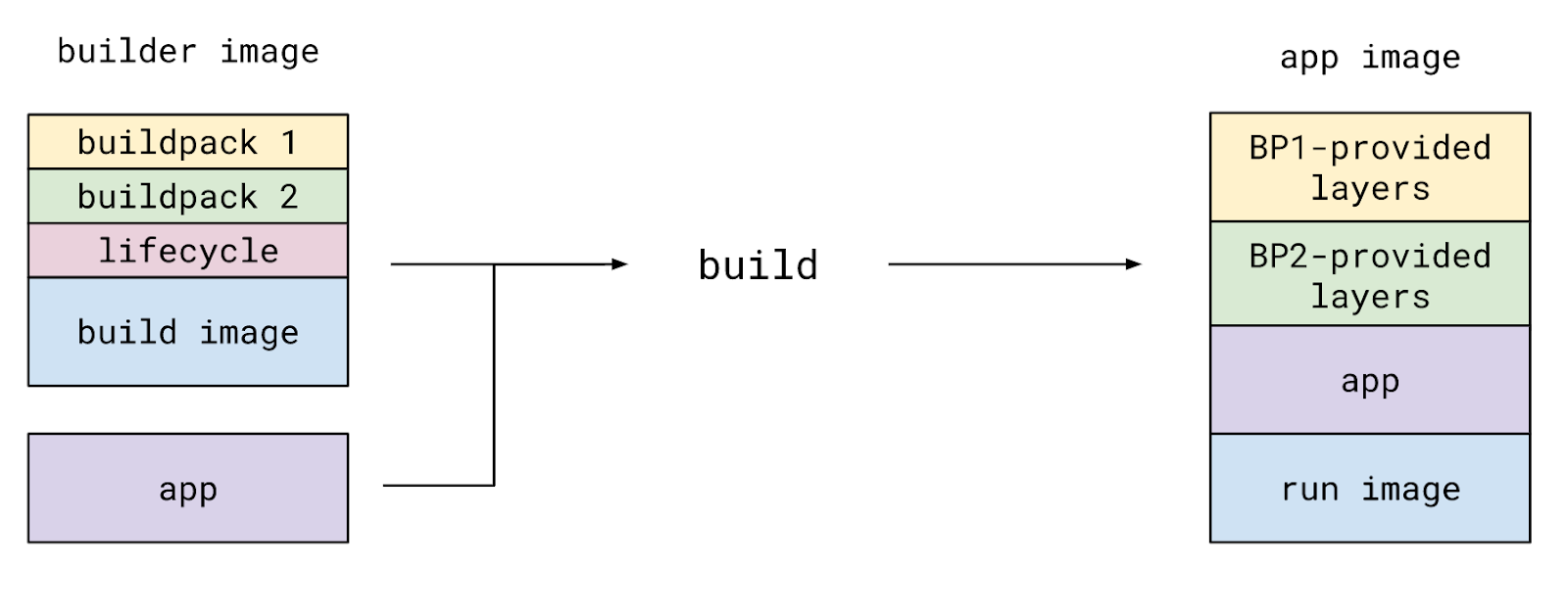

You can think of the Buildpack as a two-stage Dockerfile. You start by selecting the base image for the first stage, known as the build image in Buildpacks. You then choose one or more build and configuration tools such as Maven or Webpack to build your application, known as buildpacks. Finally, you select the base image for the second stage Dockerfile, known as the run image.

During the build process, the Buildpack engine spins up the build image in a container, executes the relevant buildpacks one by one, and then overlays their outputs on top of the run image, creating a ready-to-use application image. Here’s what it looks like on the Buildpacks’ official docs:

This structured separation of concerns has several benefits, some of which are:

- The organization can define a set of applicable build images, buildpacks, and run images. Each of those can be defined and maintained separately.

- This modular design enables the reusability of components, both within the organization and the open-source community.

- The application image's operating system and runtime environment can change without rebuilding the application. This operation is known as a rebase.
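The build and rebase flow described above can be sketched as a toy model in Python. This is a conceptual illustration only, not how the Buildpacks lifecycle is implemented: layers are reduced to name/content pairs, the build image is left out, and the two buildpack functions are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

# A layer is modeled as a (name, content) pair; real layers are filesystem diffs.
Layer = Tuple[str, str]


@dataclass(frozen=True)
class AppImage:
    run_layers: Tuple[Layer, ...]  # from the run image (OS and runtime base)
    app_layers: Tuple[Layer, ...]  # produced by the buildpacks

    def layers(self):
        """Run-image layers sit at the bottom, buildpack layers on top."""
        return self.run_layers + self.app_layers


def build(buildpacks, source, run_image):
    """Run each buildpack in order and stack its output on the run image."""
    app_layers = tuple(bp(source) for bp in buildpacks)
    return AppImage(run_layers=tuple(run_image), app_layers=app_layers)


def rebase(image, new_run_image):
    """Swap the run-image layers; the buildpack layers are reused untouched."""
    return AppImage(run_layers=tuple(new_run_image), app_layers=image.app_layers)


# Hypothetical buildpacks, each contributing a single layer.
def jre_buildpack(source):
    return ("jre", "openjdk-11")


def maven_buildpack(source):
    return ("app", f"jar built from {source}")


if __name__ == "__main__":
    image = build([jre_buildpack, maven_buildpack], "./my-service",
                  [("os", "ubuntu-18.04")])
    patched = rebase(image, [("os", "ubuntu-18.04-patched")])
    print(patched.layers())
```

The point of the model is that `rebase` never calls a buildpack: patching the base operating system only replaces the bottom layers, which is why it can be much cheaper than a full rebuild.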

If this separation of concerns is a high priority for your team, I recommend checking out buildpacks (please note the pack CLI depends on Docker). As for the rest of us, we might want to wait for this promising project to become a bit easier to use.

Jib

If you are a JVM developer and a structured build process for your container application has caught your attention, Jib might be just up your alley. This Google open source project offers Gradle and Maven plugins to build container images from your favorite build tools. As a bonus, Jib is self-contained and does not require the Docker engine.
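For a feel of what invoking Jib looks like, here is a small Python sketch that assembles a Maven command line without running it. It assumes the plugin is called by its full coordinates so the `pom.xml` needs no changes; the pinned plugin version and the image name are placeholders, so check the Jib documentation for the current release.

```python
import shlex

# Maven coordinates of the Jib plugin; the version is supplied separately.
JIB_PLUGIN = "com.google.cloud.tools:jib-maven-plugin"


def jib_maven_command(image, plugin_version="3.0.0", to_docker_daemon=False):
    """Return the argv for building an image with Jib via Maven.

    The default plugin_version is an assumption made for this example.
    """
    # "build" pushes straight to a registry without needing Docker;
    # "dockerBuild" loads the image into a local Docker daemon instead.
    goal = "dockerBuild" if to_docker_daemon else "build"
    return [
        "mvn", "compile",
        f"{JIB_PLUGIN}:{plugin_version}:{goal}",
        f"-Dimage={image}",
    ]


if __name__ == "__main__":
    # registry.example.com/team/app is a placeholder image name.
    print(shlex.join(jib_maven_command("registry.example.com/team/app:1.0")))
```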

For a similar but language-agnostic approach, check out Bazel’s rules for building container images.

Docker-Free Building

At the very end of 2020, the Kubernetes team deprecated Docker as a container runtime in favor of other container runtimes. For most of us mere mortals, as users of the Kubernetes ecosystem, this has no practical implications.

You might be thinking of leaving Docker behind as well. Maybe the client-daemon architecture doesn’t work well in your use-case, or perhaps you need better rootless support. If that’s the case, then there are a few alternatives to check out.

If you are looking to run your container builder within a container, you should take a look at Kaniko. This open-source project by Google offers a pre-built container image used to build new container images. Google originally developed Kaniko to run in a Kubernetes cluster, but you can run it in Docker and other container environments as well.
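As a sketch of what running Kaniko inside a cluster might look like, the following Python snippet generates a minimal Pod manifest as JSON. The pod name, Git repository, and destination registry are hypothetical, and registry credentials (which a real push would need) are deliberately omitted to keep the example short.

```python
import json

# The executor image published by the Kaniko project.
KANIKO_IMAGE = "gcr.io/kaniko-project/executor:latest"


def kaniko_pod(name, context, destination, dockerfile="Dockerfile"):
    """Return a Pod manifest that runs one Kaniko build and then exits."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # A build is a one-shot job, so the pod should not be restarted.
            "restartPolicy": "Never",
            "containers": [{
                "name": "kaniko",
                "image": KANIKO_IMAGE,
                "args": [
                    f"--context={context}",
                    f"--dockerfile={dockerfile}",
                    f"--destination={destination}",
                ],
            }],
        },
    }


if __name__ == "__main__":
    # Hypothetical repository and registry; pushing also needs credentials,
    # which are not configured in this sketch.
    pod = kaniko_pod(
        "kaniko-build",
        "git://github.com/example/app.git",
        "registry.example.com/team/app:latest",
    )
    print(json.dumps(pod, indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed manifest could be saved to a file and applied with `kubectl apply -f`.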

If you are a Linux user, you can check out Podman, an open-source daemonless container engine. Podman aims to be fully compatible with Docker, or as they like to put it, alias docker=podman.

Summary

For software engineers working in the Kubernetes ecosystem, our work products are container images more often than not. And while those images are a great way to ship software (if you haven’t done so, check out Solomon Hykes’ fantastic talk), building high-quality images is a non-trivial task.

And yet, we don’t always want every team member to become a Docker and Linux expert who understands the intricacies of building those production-grade images. The challenge is even more significant in larger organizations, where different stakeholders are in charge of defining and enforcing various policies regarding security, performance, and standardization.

Maybe in 2021, we’ll finally see the structured approaches to container building mature, providing a comparable, or even better, user experience to the traditional Dockerfile.

Check out the full Tools For Kubernetes Series:

Part 1: Helm, Kustomize & Skaffold

Part 2: Skaffold, Tilt & Garden

Part 3: Lens, VSCode, IntelliJ & Gitpod

Part 5: Development Machines